Hey, Carlos again.

This week we're going to go into what A/B testing is, why it matters for PMs (yes, even the non-technical ones!) and take a look at how Netflix aces personalization through A/B testing.

All PMs know that endless testing is part of building great digital products, but running great tests takes work. If you’re passionate about reaching your conversion goals, improving your user experience, and increasing revenue, you can get up to speed on A/B testing here!

What is A/B Testing?

A/B testing involves splitting your audience or user base into two groups. If your user base is currently very small, you can run a 50/50 split across all of it. With a larger user base, you can run the test on a sample of users instead.

The two groups then receive two versions of a feature. It could be something as small as a different call to action button color, or it could be a re-ordering of your onboarding sequence.
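To make the split concrete, here is a minimal sketch of one common way to assign users to groups: hashing the user ID so each user always sees the same version. The function name, the experiment name, and the user IDs are all made up for illustration, and real testing tools handle this step for you.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "cta_button_color") -> str:
    """Deterministically place a user in group "A" or "B" (roughly 50/50)."""
    # Hash the experiment name together with the user ID so the same user
    # always lands in the same group for this experiment.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # a number from 0 to 99
    return "A" if bucket < 50 else "B"


# Hypothetical user IDs, only to show that the split comes out close to even.
users = [f"user-{i}" for i in range(10_000)]
groups = [assign_variant(u) for u in users]
print("Group A:", groups.count("A"), "Group B:", groups.count("B"))
```

Hashing instead of picking at random on every visit means nobody flips between the two button colors mid-test.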

After a certain amount of time (which will differ depending on the test, product, goal, etc.), a product manager will look at the data gathered to decide which version should be rolled out to the entire user base.

This is the simplest way of testing, but you might also choose multivariate testing (which we’ll get to shortly).

The point of running a test is to figure out how best to improve your product. You might come up with a hypothesis for an improvement, or not be able to decide between Button A and Button B. The only way to make an informed, data-driven decision is through testing.
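If you're curious what "looking at the data" can mean in practice, here is a rough sketch of one standard check, a two-proportion z-test on conversion rates. It is not the only way to read a test, and the conversion numbers below are invented for the example.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Compare the conversion rates of versions A and B.

    Returns (rate_a, rate_b, z, p_value) for a two-sided test.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate: the conversion rate if A and B were really the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rate_a, rate_b, z, p_value


# Invented numbers: 200 of 5,000 users converted on A, 250 of 5,000 on B.
rate_a, rate_b, z, p_value = two_proportion_z_test(200, 5_000, 250, 5_000)
print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  z = {z:.2f}  p = {p_value:.3f}")
```

A small p-value (a common cutoff is 0.05) suggests the difference between the two versions is unlikely to be pure chance.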

Why Does it Matter for Product Managers?

A/B testing is used broadly across a number of disciplines, so it’s useful for many professionals. But it’s absolutely essential for product managers! A/B testing is one of the skills recruiters ask for most, and there’s hardly a PM out there who won’t be involved in testing at some point during their career.

So as a professional, you’ll definitely need to know how to plan, execute, and monitor an A/B test, and what to do with the test results. It’ll be you who needs to turn the data into insights.

What about non-tech PMs?

Even for non-technical PMs, A/B testing is an important part of the job and needs to be one of the most well-developed tools in your toolbox. The more technical you are, the more involved you’ll be with the deployment and implementation of your test variations.

A non-technical PM will simply leave more of the tech work to the engineers. But you need to know how to plan a test, and what to do with the data when the test is over. Often it’ll also be your call to run the test in the first place, so you’ll be the one who needs to recognize when a test would be worth doing.

Not having a technical background is no excuse for not being an awesome PM!

A/B Testing Case Study: Netflix

Netflix is well known for being a great A/B testing playground! Every single feature has been tested rigorously, and your own feed is constantly running experiments. For example, you may have noticed that titles are displayed with different images. One day it’s Captain America in the preview for The Avengers, and the next it’s Black Widow. That’s the algorithm figuring out which character users are most likely to click on.

If you’re a person who likes movies with strong female leads, the algorithm learns to show you similar movies, and to always highlight the female talent in the content.

Netflix has a long history of running A/B tests. And these tests often reveal surprising information that the teams wouldn’t have anticipated.

Gibson Biddle, former VP of Product at Netflix, spoke at #ProductCon on how Netflix uses testing to find new ways to delight customers. In the early days when they still rented out DVDs, customers cited having to wait for the latest release as their main point of dissatisfaction.

Netflix hypothesized that if they solved this problem, user retention would increase. They rolled out a very expensive test in order to see what would happen if they gave customers exactly what they wanted. It seems like a no-brainer: give the people what they want and they’ll be happier. It turns out that spending millions to give the people what they want only increased retention by 0.05%, about 5000 users at the time.

$1 million to roll out, to save 5000 users with a lifetime value of roughly $100 each. That is roughly $500,000 in retained value, about half of what it cost. Now that’s a no-brainer!
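Here is the back-of-the-envelope math spelled out, using only the figures quoted above. The variable names are just for illustration.

```python
rollout_cost = 1_000_000   # dollars, as quoted above
users_retained = 5_000     # users saved by the change
lifetime_value = 100       # rough dollars per retained user

value_retained = users_retained * lifetime_value
net_result = value_retained - rollout_cost

print(f"Value of retained users: ${value_retained:,}")
print(f"Net result of the rollout: ${net_result:,}")
```

The retained users are worth about half of what the rollout costs, so the numbers argue against it.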

Start A/B Testing: The Steps You Need to Take

1. Develop a hypothesis

The first step is to determine what it is you’re trying to find out. Even if you think you already know the why behind the test, if you don’t get this part 100% right, the test will be a waste of time.

This step will include deciding what you’re trying to test, but also how you’ll be measuring the results. You don’t want to run a test and realize that the results are untrackable.

Testing isn’t done wildly and blindly – you need to base your hypothesis on real pain points within your product. Find something that needs fixing, and think of ways to potentially fix it.

For example, if you find that customer churn is high for users who use a certain feature, look at that feature and hypothesize what might increase retention.

2. Set the parameters for the test

This is where you’ll design your test. You need to decide which segments of users you’ll be targeting with the test and for how long, and work out the details of exactly what the test will look like. Working collaboratively here will be your greatest asset, and the more eyes you have on the test, the better. Within reason, of course. Nobody wants too many cooks in the kitchen!
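One way to put a number on "how long" is to estimate how many users each group needs before the difference you hope for can be detected reliably. The sketch below uses a standard approximation for comparing two conversion rates. The baseline rate, target rate, and daily traffic are assumptions made up for the example, and the 5% significance level and 80% power are common defaults rather than rules.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_group(baseline_rate: float, expected_rate: float,
                          alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate users needed in EACH group for a two-sided test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


def days_needed(users_per_group: int, daily_eligible_users: int) -> int:
    """Days until both groups are full, given daily traffic into the test."""
    return ceil(2 * users_per_group / daily_eligible_users)


# Assumed scenario: 4% conversion today, hoping for 5%, 1,000 eligible users a day.
n = sample_size_per_group(0.04, 0.05)
print("Users per group:", n)
print("Days to run:", days_needed(n, 1_000))
```

The smaller the improvement you are trying to detect, the more users (and time) the test needs.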

You’ll also need to work out the operational costs of the tests and compare that with the potential gains from successfully improving the feature. If a test will cost you hundreds of thousands to run, with the potential to only save you a hundred…it’s not a worthwhile investment.

Before you deploy the test, you need to make sure it meets the minimum acceptance criteria. Every test in your backlog needs to have a detailed:

Hypothesis

Definition

Implementation plan

Expected outcome

Once you’ve met the minimum criteria, you’re ready to deploy!
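If your test backlog lives in a spreadsheet or a script, the four criteria above can be checked automatically. This is only a sketch: the class and field names are invented to mirror the list, not taken from any particular tool.

```python
from dataclasses import dataclass, fields


@dataclass
class ExperimentProposal:
    """One entry in the test backlog (hypothetical structure)."""
    hypothesis: str = ""
    definition: str = ""
    implementation_plan: str = ""
    expected_outcome: str = ""


def missing_criteria(proposal: ExperimentProposal) -> list:
    """Return the names of any criteria that are still blank."""
    return [f.name for f in fields(proposal)
            if not getattr(proposal, f.name).strip()]


draft = ExperimentProposal(
    hypothesis="Reordering onboarding will increase retention",
    definition="Show the product tour before sign-up for group B",
)
print("Still missing:", missing_criteria(draft))
```

An empty list means the test meets the minimum criteria and is ready to deploy.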

3. Deploy the test

The parameters of the test may change. After all, PMs have to be ready for anything! You might reach your target for the amount of data you want to collect halfway through your allotted run time. Then it’ll be your call whether to keep the test running anyway, or stop it early and save resources.

Prioritizing A/B tests

While tests sit in a separate backlog to your regular product backlog, you can organize them in a very similar way. By using the same prioritization techniques used for your regular backlog, you can best understand which test should be run and when.

Check out: 3 Prioritization Techniques Every Product Manager Should Know

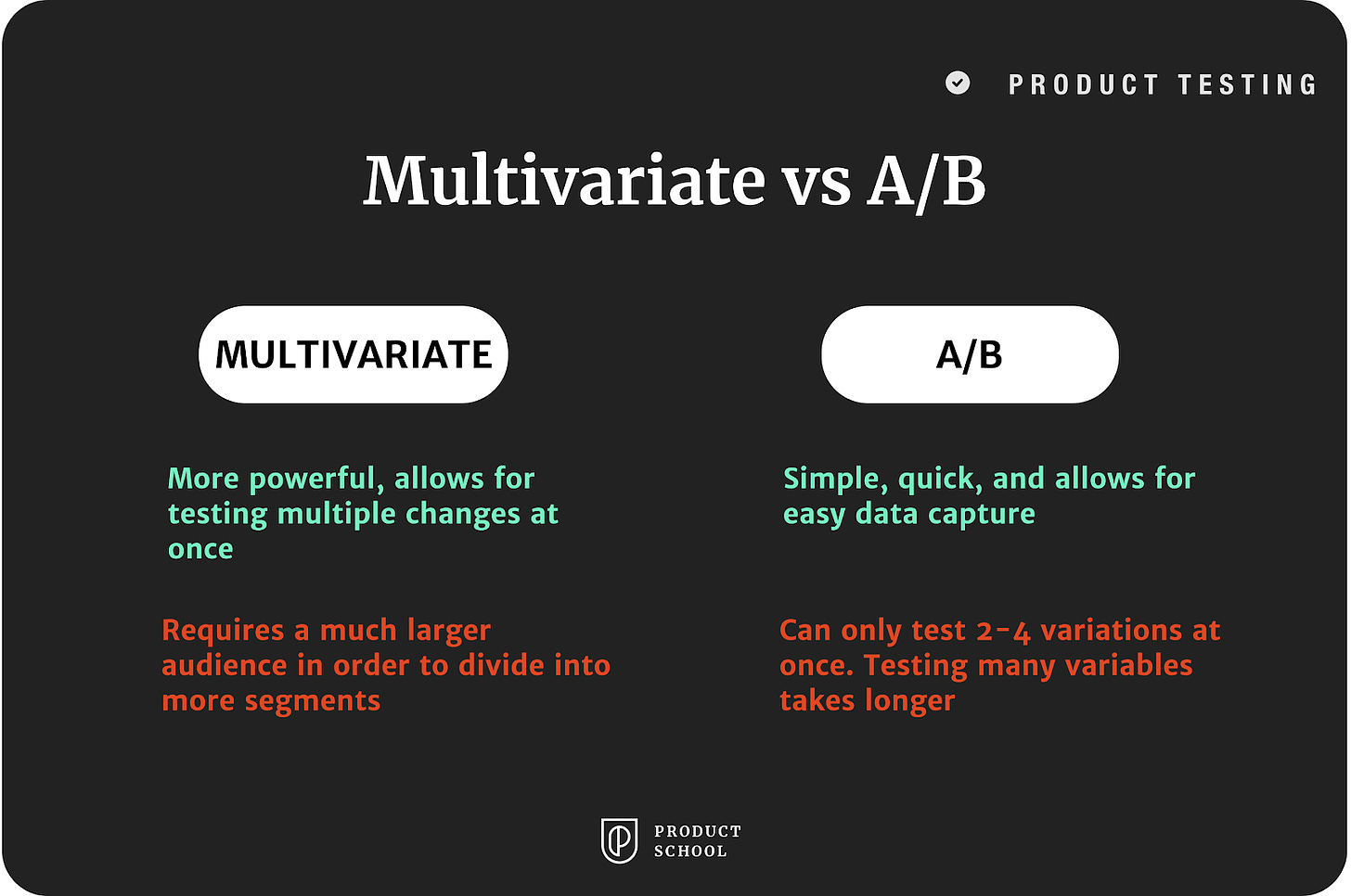

A/B Testing vs Multivariate Testing

One of the choices you might have to make is between A/B testing and multivariate testing. Multivariate testing follows the same principle as A/B testing, but it changes several variables at once and tests their combinations, so you end up with more than two variations.

Multivariate testing and A/B testing shouldn’t be thought of as opposites. They are two different ways of approaching the same problem: you need to test your ideas!

Multivariate testing is great if you have many variables to test at once, and if you have a large enough user base to be able to create multiple segments.
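To see why the size of your user base matters here, this small sketch counts how many variations you get when you test a few variables together. The variables, their options, and the user count are all invented for the example.

```python
from itertools import product

# Hypothetical variables to test together, each with two options.
variables = {
    "button_color": ["blue", "green"],
    "headline": ["short", "long"],
    "onboarding_order": ["tour_first", "signup_first"],
}

# Every combination of options becomes its own variation.
variations = [dict(zip(variables, combo))
              for combo in product(*variables.values())]

total_users = 80_000  # assumed size of the audience in the test
users_per_variation = total_users // len(variations)

print("Number of variations:", len(variations))
print("Users per variation:", users_per_variation)
```

Each extra variable multiplies the number of variations, so every segment gets a smaller share of your users.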

What to Do With The Data

The biggest mistake you can make after running an A/B test is to just pick the winner and forget about the test. “OK, the people like the blue button more than the green. So we use the blue button and forget the test ever happened.” Sure, you’ve got yourself a winning button, but now you’re throwing out valuable information.

Testing is about digging deep and understanding the reason why. Your users have given you more information about themselves, and to serve them properly you need to take advantage of that. The data you gain might not be so simple, even if the test itself is.

You should also make sure that the data is available to anyone who may be interested in it. You never know what insights other teams could gather from it.

A/B Testing Tools

Choosing your A/B testing tool can feel a little overwhelming when there are so many to choose from! To keep it simple, we’ve whittled it down to our two favorite picks.

If you’re a larger organization, we recommend Optimizely. Their goal is to help you, the product manager, to build better products through experimentation. Optimizely works with big brands like Sony and eBay, so you can certainly trust it with your data, and it can help you deploy powerful tests and manage the insights you learn from them.

If you’re building something for yourself, or are on a very tight budget, plenty of developers like to keep it simple with Google Experiments. While a little less user-friendly and not as powerful as Optimizely, it’s still well-loved by more technical product managers.
